S3 Web Console Multi-File downloader – a simple to install Bookmarklet

If you are looking for a simple solution to download multiple files from the AWS S3 Web Console look no

If you are looking for a simple solution to download multiple files from the AWS S3 Web Console look no

re:Invent is the Super Bowl of Cloud Computing! This week long super conference focused around Amazon Web Services delivers a

I am excited to announce I will be presenting at the 2024 AWS Summit Washington, DC. The AWS DC Summit

I am excited to announce I will be presenting at the 2023 AWS Summit Washington, DC. The AWS DC Summit

If you get the following error running PHP using the AWS SDK for PHP, then this post is for you!

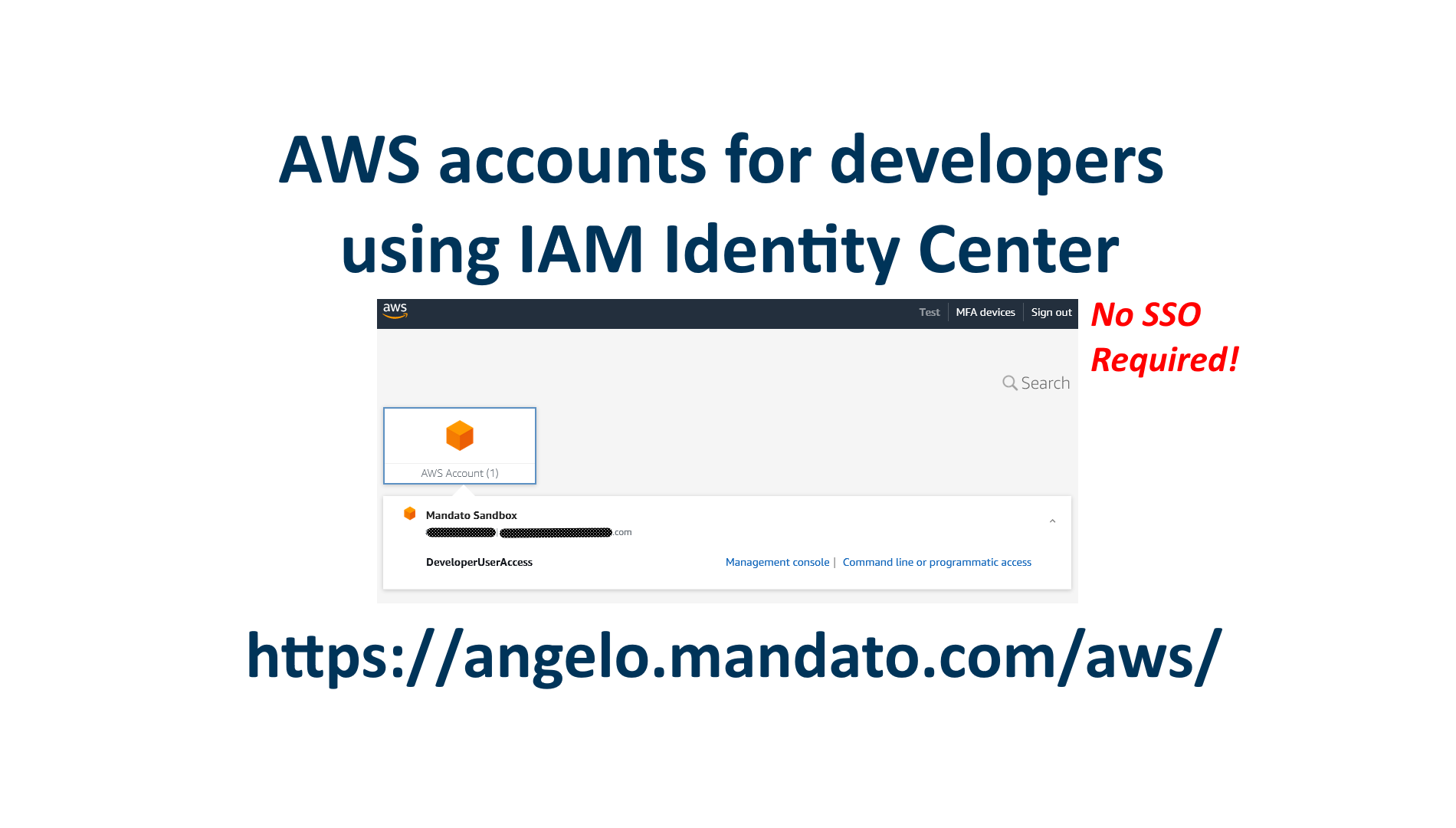

Learn how to easily setup IAM Identity Center to manage logins for developers to access AWS accounts for test and production environments. Developers can then sign in to the console or obtain temporary keys for programmatic access.

Files stored in AWS S3 can be redirected to specific URLs. By utilizing the S3 static website feature, a special

Creating a Pre Business Plan is the first step to start the process of validating your idea. Why create a

Subscribe: Apple Podcasts | More Options

Redirect URLs with specific path prefixes or HTTP error codes on AWS using S3 static website JSON Redirection Rules feature.

Welcome to the Cloud Entrepreneur Podcast! The Cloud Entrepreneur Podcast provides FREE resources to plan, organize and grow your software

Subscribe: Apple Podcasts | More Options

Run API Gateway locally to develop and test before deploying into the AWS environment.

Redirect an entire website using Amazon Web Services maintenance free serverless resources.

Get the latest news and episodes of the Cloud Entrepreneur Podcast and Angelo’s development blog directly in your inbox!